Motion Generation

-

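We propose a general symmetry-assisted DRL framework that enables stable and robust learning for morphologically symmetric robots.

The framework models the environment as a symmetric Markov decision process (MDP) and uses symmetry operators to construct a whole-body policy from a one-sided base policy.

It further defines a symmetric PPO objective based on a coupled importance sampling ratio.

This objective aligns the policy optimization process with the imposed symmetry and offers a principled alternative to MAPPO-style multi-agent formulations.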
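As a rough illustration of the idea, the sketch below applies one shared base policy to an observation and to its mirrored counterpart to obtain a whole-body action, and then forms a single clipped PPO surrogate from the product of the two per-side probability ratios. Everything here is an assumption made for illustration: the function names, the linear-tanh base policy, and a mirroring operator that merely swaps the two halves of the observation vector (a real robot would also need sign flips for lateral quantities). It is not the implementation from the paper.

```python
import numpy as np


def mirror_obs(obs):
    """Hypothetical symmetry operator: swap the two halves of the observation."""
    half = obs.shape[-1] // 2
    return np.concatenate([obs[..., half:], obs[..., :half]], axis=-1)


def base_policy(obs, weights):
    """Stand-in one-sided policy (assumed): a linear map followed by tanh."""
    return np.tanh(obs @ weights)


def whole_body_policy(obs, weights):
    """Compose the whole-body action from the shared one-sided policy."""
    side_a = base_policy(obs, weights)              # acts on the original view
    side_b = base_policy(mirror_obs(obs), weights)  # acts on the mirrored view
    return np.concatenate([side_a, side_b], axis=-1)


def coupled_clipped_objective(logp_new, logp_old, advantage, eps=0.2):
    """Clipped PPO surrogate on one coupled ratio (assumed form).

    logp_new and logp_old have shape (batch, 2) and hold the per-side
    log-probabilities. Summing them before exponentiating gives the ratio
    of the joint action, so clipping is applied once per sample instead of
    once per side.
    """
    ratio = np.exp(logp_new.sum(axis=-1) - logp_old.sum(axis=-1))
    clipped = np.clip(ratio, 1.0 - eps, 1.0 + eps)
    return np.mean(np.minimum(ratio * advantage, clipped * advantage))
```

With a shared base policy, mirroring the observation swaps the two halves of the action, which is the kind of equivariance such a framework relies on. Clipping one joint ratio, rather than one ratio per side as in a per-agent formulation, is one plausible reading of "coupled"; the paper should be consulted for the exact definition.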

-

R. Hakoda, Y. Liu, M. Hwang, Y. Sato, J. Takamatsu, K. Ikeuchi, and T. Oishi,

"Morphological symmetry-aware generalized policy network for deep reinforcement learning,"

Frontiers in Robotics and AI, 13:1816301, 2026.

-

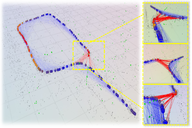

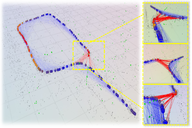

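This work proposes pose-graph topological integrity (PGTI) as a way to evaluate the correctness of pose graphs in SLAM.

Conventional map quality metrics do not adequately capture inconsistencies between the constructed map and the real environment.

The proposed method instead uses Heat Kernel Signatures (HKS) to directly quantify the topological inconsistency between the pose graph and a support graph derived from free-space constraints.

This enables topological integrity to be evaluated at multiple scales and for each vertex.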
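For readers unfamiliar with HKS, the following minimal sketch computes the standard graph heat kernel signature, HKS(v, t) = Σ_i exp(-λ_i t) φ_i(v)², from the eigen-decomposition of the combinatorial graph Laplacian, and then compares two graphs vertex by vertex. The function names, the use of an unweighted Laplacian, the assumption that both graphs share the same vertex set, and the absolute-difference comparison are all illustrative assumptions, not the measure defined in the paper.

```python
import numpy as np


def heat_kernel_signature(adjacency, times):
    """Return an (n_vertices, n_times) array of HKS values.

    adjacency: symmetric (n, n) adjacency matrix of an undirected graph.
    times:     1-D array of diffusion times; small t captures local
               structure, large t captures global structure.
    """
    A = np.asarray(adjacency, dtype=float)
    laplacian = np.diag(A.sum(axis=1)) - A
    evals, evecs = np.linalg.eigh(laplacian)  # columns are eigenvectors
    # HKS(v, t) = sum_i exp(-lambda_i * t) * phi_i(v)^2
    return (evecs ** 2) @ np.exp(-np.outer(evals, times))


def hks_discrepancy(adj_a, adj_b, times):
    """Per-vertex, per-scale absolute HKS difference (assumed comparison)."""
    return np.abs(heat_kernel_signature(adj_a, times)
                  - heat_kernel_signature(adj_b, times))


# Toy example: a 4-vertex chain versus the same chain with a loop-closing edge.
chain = np.array([[0, 1, 0, 0],
                  [1, 0, 1, 0],
                  [0, 1, 0, 1],
                  [0, 0, 1, 0]])
loop = chain.copy()
loop[0, 3] = loop[3, 0] = 1
scales = np.array([0.0, 0.5, 5.0])
print(hks_discrepancy(chain, loop, scales))
```

Each row of the result corresponds to one vertex and each column to one diffusion time, which is what makes a per-vertex, multi-scale reading possible.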

-

S. Xie, K. Sakurada, R. Ishikawa, M. Onishi and T. Oishi,

"PGTI: Pose-Graph Topological Integrity for Map Quality Assessment in SLAM,"

Robotics. 2025; 14(12):189.

[code]

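This work proposes SH-FS, a fast structural representation based on spherical harmonics (SH).

SH-FS extracts structural information from a sparse point cloud as a single vector, so it can be applied to visual SLAM that relies on sparse point clouds.

To improve the robustness of SLAM systems, we also propose a structure-aware loop closing method for visual SLAM.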

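We propose a method that achieves navigation simply by attaching a SLAM device such as a camera, smartphone, or HMD to a robot.

To realize this navigation, we propose a motion-based calibration method suited to the robot's degrees of freedom.

In addition, the calibration parameters are corrected in real time to keep the robot consistent with the environment, which yields stable navigation.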

-

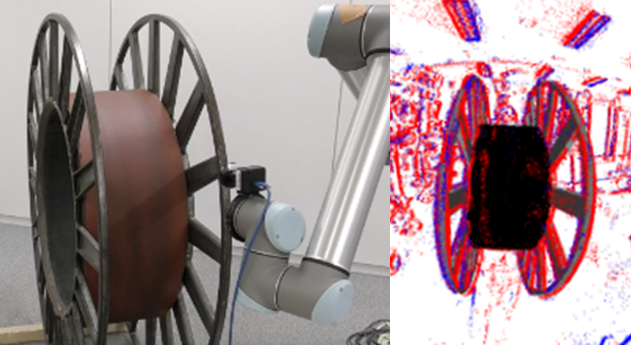

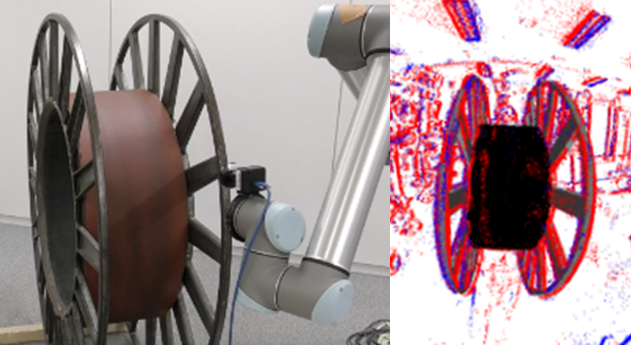

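We propose event-camera tracking methods for robotic object manipulation.

These include a method that tracks objects at very high speed using a distance field, and a robust method that adopts a camera projection model compatible with a variety of lenses and applies an error function that accounts for motion blur.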

-

Y. Kang, G. Caron, R. Ishikawa, A. Escande, K. Chappellet, R. Sagawa, T. Oishi,

"Direct 3D model-based object tracking with event camera by motion interpolation,"

2024 IEEE International Conference on Robotics and Automation (ICRA), Yokohama, Japan, 2024, pp. 2645-2651.

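This work proposes a learning-based method that detects four different types of edges in 2D images.

The network architecture is based on the Swin Transformer, and introducing a Dice loss makes it possible to extract thin edges.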
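To show why a Dice loss suits thin edges, here is a minimal sketch of one common form of the loss, 1 - 2|P∩T| / (|P| + |T|), on per-pixel probabilities. The function name, the smoothing constant, and this particular variant are assumptions; the paper may use a different formulation or combine it with other terms.

```python
import numpy as np


def dice_loss(pred, target, eps=1e-6):
    """Soft Dice loss between predicted edge probabilities and a binary mask."""
    pred = np.asarray(pred, dtype=float).ravel()
    target = np.asarray(target, dtype=float).ravel()
    intersection = np.sum(pred * target)
    # eps keeps the ratio defined when both maps are empty
    return 1.0 - (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)


# A 10x10 image whose only edge is a one-pixel-wide vertical line.
mask = np.zeros((10, 10))
mask[:, 4] = 1.0

all_background = np.zeros_like(mask)  # 90% pixel accuracy, yet misses the edge
print(dice_loss(all_background, mask))  # close to 1
print(dice_loss(mask, mask))            # close to 0
```

Because the loss is normalized by the size of the edge region rather than the whole image, a prediction that ignores a thin edge is penalized heavily even though almost every pixel is classified correctly.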

Robot Systems

-

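Pesticide spraying is indispensable for growing crops, but it raises major concerns about food safety and cost.

We therefore developed a system that detects and removes pests using a quadruped robot capable of traversing uneven terrain.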

-

S. Balasooriya, Y. Sato, T. Oishi,

"Autonomous Robotic Platform for Proximal Data Collection Amongst Foliage Utilizing an Anisotropically Flexible Manipulator,"

The 2024 16th IEEE/SICE International Symposium on System Integration (SII), Ha Long, Vietnam, 2024, pp. 1498-1503.

-

H. Hansen, Y. Liu, R. Ishikawa, T. Oishi, Y. Sato,

"Quadruped Robot Platform for Selective Pesticide Spraying,"

18th International Conference on Machine Vision Applications (MVA), Hamamatsu, Japan, 2023. pp. 1-6.

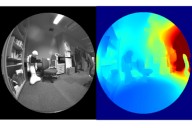

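To take advantage of the wide field of view available from a drone, we developed a system that estimates the drone's pose from a ground vehicle.

The system obtains the relative position and orientation by combining direct measurement with LiDAR and indirect measurement of vanishing directions with a camera.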

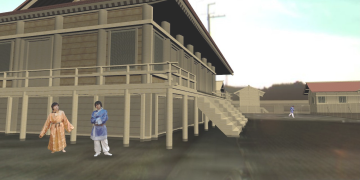

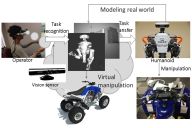

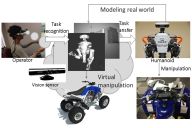

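We propose a system for operating a humanoid robot at a remote site, such as a disaster area, over a network.

To cope with latency in teleoperation and to convey motions smoothly between the human and the robot, the system uses a virtual-space interface together with task-model-based command transfer and motion generation.

For the robot to interact with its environment, we also propose a method that estimates the motion (articulation) model of a target object using human hand motion and point cloud registration.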

-

L. Fu, R. Ishikawa, Y. Sato and T. Oishi,

"CAPT: Category-level Articulation Estimation from a Single Point Cloud Using Transformer,"

2024 IEEE International Conference on Robotics and Automation (ICRA), Yokohama, Japan, 2024, pp. 751-757.

-

R. Hartanto, R. Ishikawa, M. Roxas, T. Oishi,

"Hand-Motion-guided Articulation and Segmentation Estimation,"

The 29th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), Naples, Italy, 2020,

arXiv:2005.03691, 2020.

[src]

[video]

-

M. Ogawa, K. Honda, Y. Sato, T. Oishi and K. Ikeuchi,

"Development of interface for teleoperation of humanoid robot using task model method,"

2016 IEEE/SICE International Symposium on System Integration, Dec. 2016, Sapporo, Japan.

M. Ogawa, K. Honda, Y. Sato, S. Kudoh, T. Oishi, K. Ikeuchi,

"Motion Generation of the Humanoid Robot for Teleoperation by Task Model,"

In Proc. 24th IEEE International Symposium on Robot and Human Interactive Communication,

pp. 71-76, Sept. 1, 2015, Kobe, Japan.

-

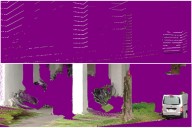

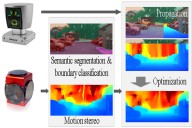

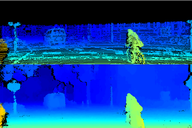

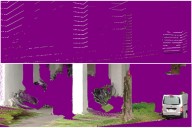

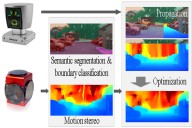

LiDAR data is highly accurate, but it becomes sparse at long range.

We are therefore developing methods that densify LiDAR data using a single image or stereo images.

-

Y. Yao, R. Ishikawa, S. Ando, K. Kurata, N. Ito, J. Shimamura, T. Oishi,

"Non-Learning Stereo-Aided Depth Completion Under Mis-Projection via Selective Stereo Matching,"

IEEE Access, vol. 9, pp. 136674-136686, 2021.

-

Y. Yao, M. Roxas, R. Ishikawa, S. Ando, J. Shimamura, and T. Oishi,

"Discontinuous and Smooth Depth Completion with Binary Anisotropic Diffusion Tensor,"

IEEE Robotics and Automation Letters, vol. 5, no. 4, pp. 5128-5135, Oct. 2020.

arXiv:2006.14374

-

A. Hirata, R. Ishikawa, M. Roxas, T. Oishi,

"Real-Time Dense Depth Estimation using Semantically-Guided LIDAR Data Propagation and Motion Stereo,"

IEEE Robotics and Automation Letters, vol. 4, no. 4, pp. 3806-3811, Oct. 2019.

[video]

[src (MATLAB for accuracy comparison)]

Urban Modeling

-

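To take advantage of the wide field of view available from a drone, we developed a system that estimates the drone's pose from a ground vehicle.

The system obtains the relative position and orientation by combining direct measurement with LiDAR and indirect measurement of vanishing directions with a camera.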

-

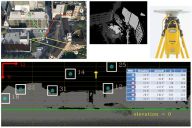

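In urban environments, for example between high-rise buildings, it is difficult to measure position accurately.

We therefore propose a method that uses surrounding 3D data to determine which satellites are observable and thereby improves the accuracy of GPS positioning.

The method checks whether an obstruction lies between the GPS antenna and each satellite, and excludes unobservable satellites from the positioning computation to improve accuracy.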
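The visibility check can be sketched as a ray test from the antenna toward each satellite. The example below is a simplified illustration under stated assumptions: buildings are approximated by axis-aligned boxes in a local East-North-Up frame rather than by the measured 3D data, satellites are given by azimuth and elevation, and all function names and values are hypothetical.

```python
import numpy as np


def direction_from_az_el(az_deg, el_deg):
    """Unit vector (east, north, up) from azimuth (clockwise from north) and elevation."""
    az, el = np.radians(az_deg), np.radians(el_deg)
    return np.array([np.sin(az) * np.cos(el),
                     np.cos(az) * np.cos(el),
                     np.sin(el)])


def ray_hits_box(origin, direction, box_min, box_max):
    """Slab test: does origin + t * direction (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, np.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if abs(d) < 1e-12:
            # Ray is parallel to this pair of faces: it must start between them.
            if o < lo or o > hi:
                return False
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        t_near = max(t_near, min(t1, t2))
        t_far = min(t_far, max(t1, t2))
    return t_near <= t_far


def visible_satellites(antenna, satellites, boxes):
    """Names of satellites whose line of sight is not blocked by any box."""
    visible = []
    for name, (az, el) in satellites.items():
        ray = direction_from_az_el(az, el)
        if not any(ray_hits_box(antenna, ray, lo, hi) for lo, hi in boxes):
            visible.append(name)
    return visible


# Hypothetical scene: one 50 m tall building 5 m east of the antenna.
antenna = np.array([0.0, 0.0, 0.0])
boxes = [(np.array([5.0, -5.0, 0.0]), np.array([10.0, 5.0, 50.0]))]
satellites = {"A": (90.0, 30.0),   # low in the east, behind the building
              "B": (270.0, 30.0),  # low in the west, open sky
              "C": (90.0, 85.0)}   # nearly overhead
print(visible_satellites(antenna, satellites, boxes))
```

Only the satellites returned by such a check would then be passed to the positioning computation.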

-

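We propose a framework for modeling underground structures such as tunnels, where GPS positioning is unavailable.

The framework acquires many overlapping partial shape scans with LiDAR and registers them simultaneously with GPS-referenced data near the exits, yielding a consistent 3D model in the global (Earth) coordinate system.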

-

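In tunnels, detecting and tracking repetitive structures such as lights and indicators is important for determining vehicle behavior.

This method achieves stable tracking by using both the appearance and the motion of the structures.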

-

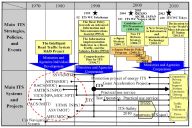

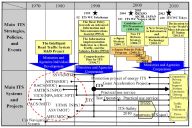

- K. Ikeuchi, T. Oguchi, M. Kuwahara, S. Ono, T. Oishi, S. Kamijo, A. Mitsuyasu, K. Koide, R. Horiguchi, M. Iijima, H. Hanabusa, M. Yoshimura, Y. Kameda, K. Mori, A. Tanaka, T. Matsunuma, H. Goto, M. Hasegawa, M. Suda, S. Sasaki, K. Kishi, S. Yorozu, H. Ichiawa, D. Oshima, Y. Tamura:

"8% Reduction of CO2 Emission by Raising Awareness of Citizens: Development and Evaluation of Regional Transport Information System for Promoting Eco-Friendly Travel Behavior",

ITS World Congress, 2015.10

K. Koide, T. Oishi and K. Ikeuchi,

"Historical Analysis of the ITS Progress of Japan,"

International Journal of Intelligent Transportation Systems Research, pp. 1-10, 2015.

S. Xie, R. Ishikawa, K. Sakurada, M. Onishi and T. Oishi,

"Fast Structural Representation and Structure-aware Loop Closing for Visual SLAM,"

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 6396-6403, 2022.

[video]

[code]

R. Ishikawa, T. Oishi, K. Ikeuchi,

"Dynamic Calibration between a Mobile Robot and SLAM Device for Navigation,"

The 28th IEEE International Conference on Robot and Human Interactive Communication, 2019.

[src (HoloLensRobotNav)]

[src (HoloLens Robot ROSPackage)]

[video(long)]

[video(short)]

Y. Kang, R. Ishikawa, G. Caron, T. Oishi,

"Event-based 6-DoF object tracking with distance field reaching 130 Hz,"

IEEE Robotics and Automation Letters, 2026.

L. Miao, R. Ishikawa, and T. Oishi,

"SWIN-RIND: Edge Detection for Reflectance, Illumination, Normal and Depth Discontinuity with Swin Transformer,"

British Machine Vision Conference (BMVC), 2023.

[video]

[code]

J. Hausberg, R. Ishikawa, M. Roxas, T. Oishi,

"Relative Drone - Ground Vehicle Localization using LiDAR and Fisheye Cameras through Direct and Indirect Observations,"

arXiv:2011.07008

2020.

[video]

Y. Yao, R. Ishikawa, and T. Oishi,

"Stereo-LiDAR Fusion by Semi-Global Matching with Discrete Disparity-Matching Cost and Semidensification,"

IEEE Robotics and Automation Letters, vol. 10, no. 5, pp. 4548-4555, May 2025.

[code]

[bib]

M. Roxas and T. Oishi

"Variational Fisheye Stereo,"

IEEE Robotics and Automation Letters, vol. 5, no. 2, pp. 1303-1310, April 2020.

arXiv:1909.07545

[src]

[video]

A. Kumar, Y. Sato, T. Oishi and K. Ikeuchi,

"Identifying Reflected GPS Signals and Improving Position Estimation Using 3D Map Simultaneously Built with Laser Range Scanner,"

13th ITS Asia-Pacific Forum, Auckland, New Zealand, April 2014.

L. Xue, S. Ono, A. Banno, T. Oishi, Y. Sato, K. Ikeuchi,

"Global 3D Modeling and its Evaluation for Large-Scale Highway Tunnel using Laser Range Sensor,"

In Proc. 19th ITS World Congress Vienna, Oct. 2012, Austria. (Best Paper Award)

Z. Wang, M. Kagesawa, S. Ono, A. Banno, T. Oishi, K. Ikeuchi,

"Detection of Emergency Telephone Indicators in a Tunnel Environment,"

International Journal of Intelligent Transportation Systems Research, Jan. 2014.
